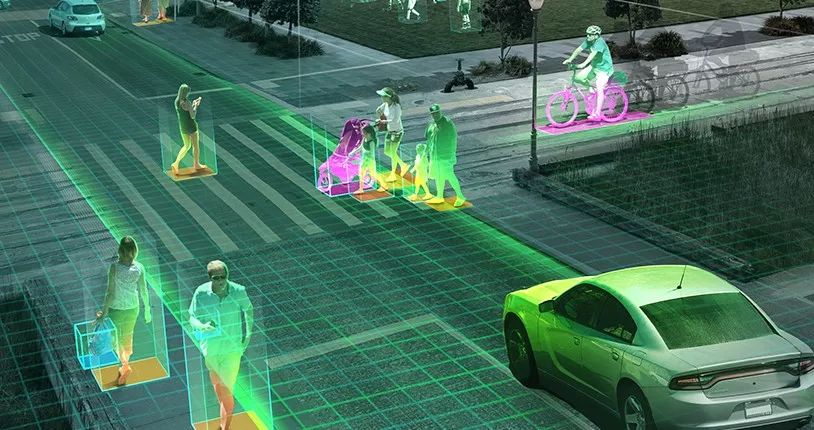

About 75% of the information humans receive from the outside world comes through the visual system, and vision is even more dominant in driving: roughly 90% of the information a driver needs is visual. Visual perception is therefore an important part of the perception system for autonomous driving.

Compared with sensors such as ultrasonic radar, millimeter-wave radar, and lidar, cameras have existed for a long time. Camera-based perception technology, however, has only risen in recent years.

Computer vision (CV), also called machine vision, is the discipline of extracting required information from images. In practice, pictures or video are captured by a hardware camera and processed by the internal ISP and DSP to obtain a clearer image. Deep learning algorithms then analyze the image, and the result is mapped to numeric or symbolic information about the real world, so that the machine can understand its surroundings.

The core technologies in this process are image analysis, image processing, and engineering application, and the vision chip plays the central role in processing.

Thanks to its capabilities in recognition, motion analysis, scene reconstruction, and image restoration, CV is widely used in security, drones, autonomous driving, and other fields.

In autonomous driving, CV can identify obstacles (pedestrians, vehicles, and so on) and road conditions, and can also be used to build maps. Compared with traditional consumer applications, the operating environment of a car is more complex and harsher, so the application of CV to autonomous driving has only just begun. The CV chip, the most critical part of the whole visual perception stack, is likewise still at an early stage.

CV & Autopilot

For use in autonomous driving, CV technology must have three characteristics: real-time performance, robustness, and practicality.

Real-time performance requires that the CV system's data processing keep pace with the vehicle's high-speed driving.

Robustness requires that the intelligent vehicle adapt well to different road types such as highways, urban expressways, and ordinary roads; to complex road conditions such as road width, color, texture, curves, slopes, potholes, obstacles, and traffic flow; and to weather conditions such as sun, cloud, rain, snow, and fog.

Practicality means that the intelligent vehicle can be accepted by ordinary users.
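To make the real-time requirement concrete, the short Python sketch below computes how far a vehicle travels while the CV system is still processing a frame. The function name, the speed of 120 km/h, and the latency values are illustrative assumptions, not figures from any specific system.

```python
def distance_during_latency(speed_kmh: float, latency_ms: float) -> float:
    """Distance in metres covered by the vehicle during one processing latency."""
    speed_m_per_s = speed_kmh * 1000.0 / 3600.0  # km/h -> m/s
    return speed_m_per_s * latency_ms / 1000.0   # ms -> s


if __name__ == "__main__":
    # Hypothetical latencies: one frame at 30 fps, 100 ms, and 500 ms.
    for latency_ms in (33.3, 100.0, 500.0):
        travelled = distance_during_latency(120.0, latency_ms)
        print(f"{latency_ms:6.1f} ms at 120 km/h -> {travelled:.2f} m")
```

At 120 km/h, a latency of 100 ms corresponds to roughly 3.3 m of travel, which is why processing delay directly limits how quickly the vehicle can react.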

At present, CV is mainly used for path recognition and tracking. Compared with other sensors, CV offers rich information, non-contact measurement, and the ability to build a three-dimensional model of the road environment. However, the amount of data to process is large, which causes problems with real-time performance and stability; solving them depends on developing high-performance computing hardware and researching new algorithms.

With the rapid development of computer and image processing technology, three-dimensional reconstruction of the road environment can provide rich information for high-speed intelligent driving, and it is expected to become practical in the near future.

The basic principle of CV road recognition is that elements of the road environment (white road markings, edges, road color, pits, obstacles, and so on) differ in gray value, image texture, and optical flow in the CCD image.
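As a minimal illustration of the gray-value part of this principle, the sketch below flags pixels that are much brighter than the surrounding asphalt, as white lane markings usually are. The threshold of 200 and the sample pixel values are assumptions chosen for the example; a real system would also use texture and optical flow and would adapt the threshold to lighting.

```python
def segment_bright_markings(gray, threshold=200):
    """Return a binary mask: 1 where a pixel is brighter than the threshold."""
    return [[1 if pixel > threshold else 0 for pixel in row] for row in gray]


def marking_columns(mask_row):
    """Column indices flagged as marking pixels in one mask row."""
    return [x for x, flagged in enumerate(mask_row) if flagged]


if __name__ == "__main__":
    # Hypothetical 8-bit gray values: dark asphalt with two bright markings.
    image = [
        [90, 95, 230, 240, 92, 88, 235, 91],
        [91, 94, 228, 238, 90, 89, 233, 93],
    ]
    mask = segment_bright_markings(image)
    for row in mask:
        print(row, "-> marking columns", marking_columns(row))
```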

Based on these differences, image processing can extract the necessary path information, such as the azimuth deviation, the lateral deviation, and the position of the vehicle on the road. Combining this information with the vehicle's dynamic equations yields a mathematical model of the vehicle control system.
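One possible way to obtain the two deviations named above is sketched below. It assumes the lane centerline has already been detected and projected into vehicle coordinates (x forward, y to the left, in metres), fits a straight line y = a + b·x by least squares, and reads the lateral deviation as a and the azimuth deviation as atan(b). The coordinate convention, the straight-line model, and the function names are assumptions for illustration, not the method of any particular system.

```python
import math


def fit_line(points):
    """Least-squares fit of y = intercept + slope * x to (x, y) points."""
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in points)
    if sxx == 0:
        raise ValueError("need at least two points with distinct x values")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in points)
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope


def path_deviation(centerline_points):
    """Return (lateral deviation in m, azimuth deviation in rad).

    Assumed convention: the vehicle sits at the origin heading along +x,
    so the intercept is the lane center's lateral offset at the vehicle
    and atan(slope) is the angle between lane direction and heading.
    """
    intercept, slope = fit_line(centerline_points)
    return intercept, math.atan(slope)


if __name__ == "__main__":
    # Hypothetical centerline samples 5 m to 20 m ahead of the vehicle.
    samples = [(5.0, 1.0), (10.0, 1.5), (15.0, 2.0), (20.0, 2.5)]
    lateral, azimuth = path_deviation(samples)
    print(f"lateral = {lateral:.2f} m, azimuth = {math.degrees(azimuth):.2f} deg")
```

These two values are the kind of inputs a steering controller would combine with the vehicle's dynamic model.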

Deep learning is widely used in CV, mainly for two reasons. First, deep learning algorithms are very general: Fast R-CNN, for example, achieves very good results in face, pedestrian, and general object detection tasks. Second, they transfer well: features learned on ImageNet (object classification) also achieve very good results in scene classification tasks.

Deep learning computation consists mainly of convolution and matrix multiplication. Optimizing these operations improves the performance of all deep learning algorithms, so engineering development, optimization, and maintenance costs are low.
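The link between convolution and matrix multiplication can be shown with the common "im2col" trick: every image patch is unrolled into a row, and the convolution becomes a product of the patch matrix with the flattened kernel. The pure-Python sketch below is for illustration only (single channel, stride 1, no padding, and, as in most deep learning frameworks, no kernel flip); the function names are hypothetical, and production code would rely on optimized libraries or hardware.

```python
def im2col(image, k):
    """Unroll every k x k patch of a 2D image into one row of a matrix."""
    height, width = len(image), len(image[0])
    return [
        [image[i + di][j + dj] for di in range(k) for dj in range(k)]
        for i in range(height - k + 1)
        for j in range(width - k + 1)
    ]


def conv2d_as_matmul(image, kernel):
    """Convolution computed as (patch matrix) x (flattened kernel vector)."""
    k = len(kernel)
    flat_kernel = [v for row in kernel for v in row]
    out_h = len(image) - k + 1
    out_w = len(image[0]) - k + 1
    flat_out = [
        sum(p * q for p, q in zip(patch, flat_kernel))
        for patch in im2col(image, k)
    ]
    return [flat_out[r * out_w:(r + 1) * out_w] for r in range(out_h)]


def conv2d_direct(image, kernel):
    """Reference sliding-window implementation for comparison."""
    k = len(kernel)
    return [
        [
            sum(image[i + di][j + dj] * kernel[di][dj]
                for di in range(k) for dj in range(k))
            for j in range(len(image[0]) - k + 1)
        ]
        for i in range(len(image) - k + 1)
    ]


if __name__ == "__main__":
    image = [[1, 2, 3], [4, 5, 6], [7, 8, 9]]
    kernel = [[1, 0], [0, -1]]
    print(conv2d_as_matmul(image, kernel))
    print(conv2d_as_matmul(image, kernel) == conv2d_direct(image, kernel))
```

Because both operations reduce to the same multiply-accumulate pattern, a chip that accelerates that pattern benefits every network built from these layers.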

CV in autonomous driving must be reliable, low-power, and computationally powerful, which is why dedicated CV chips for autonomous driving have emerged.

In developing and manufacturing a CV chip, beyond the difficulty of the process technology, the biggest challenges lie in chip design and algorithm tuning. Few companies have chip design capabilities; most do their R&D on top of mature IP cores.

According to industry sources, developing on external IP cores is common practice, but without low-level design capability it is difficult to get the most out of the chip when tuning algorithms and chip performance. The result is gaps in performance indicators such as power consumption and computing power.

International CV chip makers

Companies targeting vision chips for autonomous driving include ADI, NXP, TI, Mobileye/ST, Movidius, NEXTCHIP, Ambarella, and Inuitive.

Analog Devices, Inc. (ADI) is a manufacturer of digital signal processing (DSP) chips. Its Blackfin processors (BF60x series) were released specifically for ADAS and support functions such as lane departure warning, traffic sign recognition, intelligent headlamp control, object detection and classification, and pedestrian detection.

The low-end system is based on the BF592 and implements LDW (Lane Departure Warning). The mid-range system is based on the BF53x/BF54x/BF561 and implements LDW, HBLB (Intelligent High Beam Control), and TSR (Traffic Sign Recognition). The high-end system is based on the BF60x and implements LDW, HBLB, TSR, FCW (Forward Collision Warning), and PD (Pedestrian Detection). The integrated vision preprocessor significantly reduces the load on the processor, which in turn lowers the performance required of the processor.

The NXP S32V234 is an ADAS processing chip in the NXP S32V series, introduced in 2015. On the BlueBox platform it is responsible for visual data processing, multi-sensor fusion, and machine learning.

The chip has a CPU (four ARM Cortex-A53 cores and one Cortex-M4), a 3D GPU (GC3000), and a vision acceleration unit (two APEX-2 vision accelerators). It can support four cameras simultaneously, and the GPU can perform real-time 3D modeling with 50 GFLOPS of computing power. The S32V234 was designed with safety mechanisms such as ECC (error checking and correction), FCCU (fault collection and control unit), and M/L BIST (memory/logic built-in self-test) to meet the requirements of ISO 26262 ASIL B to C.

The TDA SoC family from Texas Instruments includes the TDA2x, TDA3x, and TDA2Eco. The TDA3x series supports ADAS algorithms such as lane keeping, adaptive cruise control, traffic sign recognition, pedestrian and object detection, forward collision prevention, and reverse collision prevention.

These algorithms are critical to many ADAS applications, such as front cameras, surround view, fusion, radar, and smart rear cameras. In addition, the TDA3x processor family helps customers develop ADAS applications that comply with NCAP procedures, such as autonomous emergency braking (AEB) for pedestrians and vehicles, forward collision warning, and lane keeping assistance.

Although Mobileye is not a chip manufacturer, it partners with STMicroelectronics (ST) to produce the well-known EyeQ series of chips for autonomous driving.

Its most advanced chip, the EyeQ5, has 8 multi-threaded CPU cores and 18 of Mobileye's next-generation vision processors. By comparison, the previous-generation vision SoC, the EyeQ4, has only four CPU cores and six Vector Microcode Processors (VMPs). The EyeQ5 supports up to 20 external sensors (cameras, radars, or lidars), while the EyeQ4 can handle at most 8.

NEXTCHIP (Korea) is a semiconductor company built on image processing and transmission technology. Its products include chips for video surveillance and DVRs, SoCs, and core chips for autonomous driving systems.

In CV chips, the company focuses on autonomous driving applications. Its flagship product, APACHE4, is an SoC aimed at next-generation ADAS systems. APACHE4 incorporates dedicated detection engines that support four types of detection: pedestrian, vehicle, lane, and moving object. The embedded CEVA-XM4 imaging and vision platform lets APACHE4 customers develop differentiated ADAS applications through advanced software programming.

Ambarella has long been a technology leader in the high-definition video industry, providing low-power solutions for high-definition video compression and image processing. Its applications include security, unmanned aerial vehicles, and in-vehicle systems.

In 2015, Ambarella acquired VisLab, an Italian autonomous driving start-up, for 30 million US dollars and began pushing into the field of autonomous driving.

Since 2017, Ambarella has released the CV1 and CV2 series chips for ADAS. Both provide monocular and stereo vision processing on the same chip. CV1 can perform computer vision processing on video at resolutions up to 4K, and the deep neural network performance of CV2 is 20 times that of CV1.

Inuitive designs and manufactures advanced 3D computer vision and image processors. It has licensed the CEVA-XM4 intelligent vision DSP to run complex real-time depth sensing, feature tracking, object recognition, deep learning, and other vision-related algorithms for mobile devices.

CEVA image and vision DSPs meet the extreme processing needs of the most complex computational photography and computer vision applications, such as video analytics, augmented reality, and advanced driver assistance systems (ADAS).

The Inuitive NU3000 vision processor provides stereo vision using the third-generation CEVA-MM3101 imaging and vision DSP. It is now part of the Google Project Tango ecosystem, and developers can use it to build real-time depth generation, mapping, localization, navigation, and other complex signal processing algorithms.

Internationally renowned automotive IC manufacturers have all released CV chips for autonomous driving, but the main applications remain at the lower levels of ADAS.

According to industry insiders, chip companies pay special attention to market and timing when introducing a chip, because each chip requires tens of millions of US dollars in R&D investment, and recovering that cost basically depends on shipments at the million-unit (KK) level.

If the timing is not right and the market is not yet mature, a chip cannot sell at scale even when its performance and quality meet the requirements, which affects the company's plans and can even set the company back.

Therefore, facing the wave of autonomous driving, most traditional automotive chip giants are cautiously releasing only ADAS-class chips. They are accumulating higher-level technology internally and may already be able to reach a certain level of computing power, but they are not blindly launching higher-level chips.
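The cost-recovery argument above is simple arithmetic, sketched here in Python. The R&D cost of 30 million US dollars and the margin of 10 US dollars per chip are purely hypothetical values picked to fall within "tens of millions"; they are not figures from any vendor.

```python
import math


def break_even_units(rd_cost_usd: float, margin_per_chip_usd: float) -> int:
    """Number of chips that must ship before R&D cost is recovered."""
    if margin_per_chip_usd <= 0:
        raise ValueError("margin per chip must be positive")
    return math.ceil(rd_cost_usd / margin_per_chip_usd)


if __name__ == "__main__":
    # Hypothetical inputs: 30 million USD of R&D, 10 USD margin per chip.
    units = break_even_units(30_000_000, 10)
    print(f"break-even at {units:,} chips")
```

Under these assumed numbers the break-even point is three million chips, which shows why vendors wait for a market that can absorb shipments on that scale.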

The autonomous driving CV chip market is still at an early stage

In recent years, many Chinese startups have also launched CV chips, such as Horizon, Shenzhen Jianmei, Cambricon (Cambrian), and Xijing.

Horizon released the aftermarket Journey 1.0 in 2017. Journey delivers 1 TFLOPS of computing power at 1.5 W, processing 30 frames of 4K video per second and identifying more than 200 objects in an image. This enables advanced driver assistance functions such as FCW, LDW, and JACC and meets the computing needs of L2.
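The quoted figures can be turned into a rough per-pixel compute budget. The sketch below assumes "4K" means 3840 × 2160 pixels and treats 1 TFLOPS as a theoretical peak that is fully available for the video stream; both are assumptions, and real utilization would be lower.

```python
def ops_per_pixel(flops: float, width: int, height: int, fps: float) -> float:
    """Peak floating-point operations available per pixel per frame."""
    return flops / (width * height * fps)


def tflops_per_watt(flops: float, watts: float) -> float:
    """Compute efficiency in TFLOPS per watt."""
    return flops / 1e12 / watts


if __name__ == "__main__":
    peak_flops = 1e12  # 1 TFLOPS, as quoted
    budget = ops_per_pixel(peak_flops, 3840, 2160, 30)  # assumed 4K UHD at 30 fps
    efficiency = tflops_per_watt(peak_flops, 1.5)       # 1.5 W, as quoted
    print(f"about {budget:.0f} operations per pixel per frame")
    print(f"about {efficiency:.2f} TFLOPS per watt")
```

Under these assumptions the budget is about 4,000 operations per pixel per frame at roughly 0.67 TFLOPS per watt.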

The planned Journey 2.0 will support simultaneous input from 4 to 6 cameras; detection of vehicles, pedestrians, lane lines, and drivable areas; detection and recognition of traffic markings, including traffic signs, road signs, ground markings, traffic text, and symbols; and general obstacle detection and road surface defect detection. Journey 3.0 will support simultaneous input from up to 8 cameras and multi-sensor fusion.

Cambricon (Cambrian) released the Cambricon 1M IP for intelligent driving in 2017. CEO Chen Tianshi said that its performance would be more than 10 times that of the Cambricon 1A, with high integration and a higher performance-per-watt ratio. The goal is for all Chinese cars to use domestically made intelligent processors.

The specifications of current domestic CV chips remain at the lower, aftermarket level. Each company hopes to develop chips that meet automotive-grade requirements within the next few years.

However, moving from the aftermarket to factory installation, a chip must not only meet automotive-grade requirements but also overcome OEMs' doubts about a company with no experience in large-scale factory-installed mass production.

According to industry sources, domestic OEMs are already actively planning next-generation models, and vision-based ADAS functions are included to some degree in their product plans.

When selecting a CV solution, OEMs prefer manufacturers with mass production experience, and they weigh computing power, power consumption, and price together. OEMs will not blindly add a CV chip just because they want to add ADAS functions: if the existing conventional chip can meet the computing needs of those functions, they will tend to keep using the previous generation of products.

Making an automotive-grade CV chip is not easy, even for a manufacturer that already makes conventional automotive chips. The CV chip market is still at an early stage, and even the giants of the traditional field are still exploring and promoting. It is difficult for start-ups to break in directly.

In addition, power consumption and price at a given level of performance will become the key to competition among vendors.
