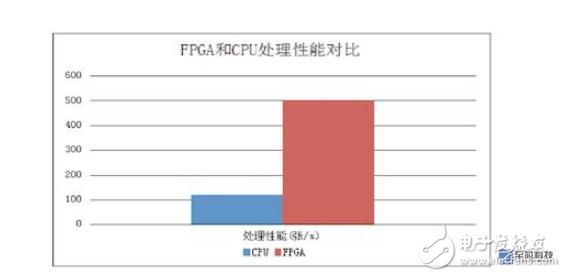

On January 20, 2017, Tencent Cloud launched China's first high-performance heterogeneous computing infrastructure, the FPGA cloud service. By delivering FPGAs as a cloud service, it opened them up to far more enterprises; previously, only large companies able to make long-term investments could use them. Enterprises can program FPGA hardware through an FPGA cloud server and achieve more than 30 times the performance of a general-purpose CPU server. Compared with GPUs, which are already well accepted for high-performance computing, FPGAs are hardware-programmable, low-power, and low-latency, and they represent the future direction of high-performance computing.

In the booming field of deep learning within artificial intelligence (AI), enterprises can also use FPGAs for the detection (inference) phase of deep learning, complementing the GPUs used mainly for the training phase. FPGAs can also be used in financial analysis, image and video processing, genomics, and other areas that require high-performance computing, and they are the best choice for industry applications that demand high efficiency.

Against this background, InfoQ interviewed the Tencent Cloud FPGA team, made up of members of the Tencent Cloud-based Product Center and the Tencent Architecture Platform, to introduce readers to the FPGA's basic principles, design intent, and application scenarios, and the value it brings to the industry.

The history of Tencent Cloud FPGA development and the team behind it

As chip process technology approaches its theoretical limits, the room for improving general-purpose processor (CPU) performance is becoming increasingly limited. With the rapid growth of the mobile Internet, Tencent's own businesses have seen a dramatic increase in data volume, accompanied by a rapid increase in the demand for computing on that data. In 2013 Tencent began to consider how to meet this growing demand for computing, and the FPGA, as programmable acceleration hardware, came into view. Having the idea was not enough; the actual capabilities of the FPGA had to be verified in practice.

Tencent's QQ and WeChat services handle hundreds of millions of user pictures every day. Commonly used picture formats include JPEG and WebP, and a WebP picture takes about 30% less storage space than the same picture in JPEG. To save storage space, reduce transmission traffic, and improve the user's picture download experience, WebP is commonly used for storage, transmission, and distribution. The resulting transcoding workload requires tens of thousands of CPU machines. Image transcoding, converting JPEG pictures into WebP pictures, was therefore the natural first application for FPGA development.

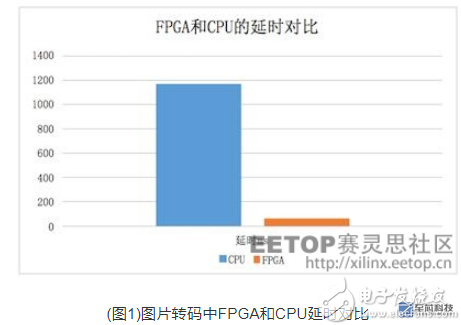

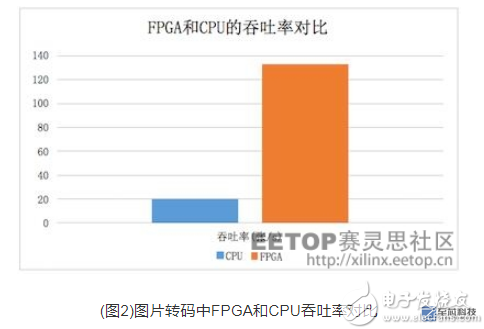

In the image transcoding project, the FPGA joint team achieved FPGA processing latency 20 times lower than that of the CPU and FPGA processing performance 6 times that of the CPU machine. This verified the FPGA's ability to accelerate computation and boosted the team's confidence.

After the picture transcoding project was completed, deep learning caught the attention of the FPGA joint team. On the one hand, deep learning is computationally intensive; on the other hand, it has great commercial value in future applications. Deep learning is based on deep neural network theory, and one of its branches, the convolutional neural network (CNN), is used for image classification. The team used FPGAs to accelerate CNN computation and strengthen the detection of offensive pictures, ultimately achieving FPGA processing performance 4 times that of the CPU machine.

The results of these projects show that FPGAs can provide powerful computing capability and sufficient flexibility in the data center to meet the challenge of data center hardware acceleration. Through this work, the FPGA joint team gained experience in using FPGAs in the data center. In the future, it will explore further in three directions, data center computing, networking, and storage, and rebuild the data center infrastructure.

Data center workloads in the cloud change rapidly and also need a high-performance, highly flexible underlying hardware architecture. The FPGA joint team is therefore opening up FPGA computing as a cloud service, to accelerate cloud computing applications in various scenarios at the hardware level and to lower the barrier to entry and the cost for enterprises.

FPGA features analysis

In March 2016, Intel announced that it was officially retiring its "Tick-Tock" processor R&D model and that its R&D cycle would lengthen from two years to three. With this, Moore's Law has almost ceased to hold for Intel. On the one hand, processor performance can no longer grow in line with Moore's Law; on the other hand, data growth demands computing performance that grows faster than Moore's Law.

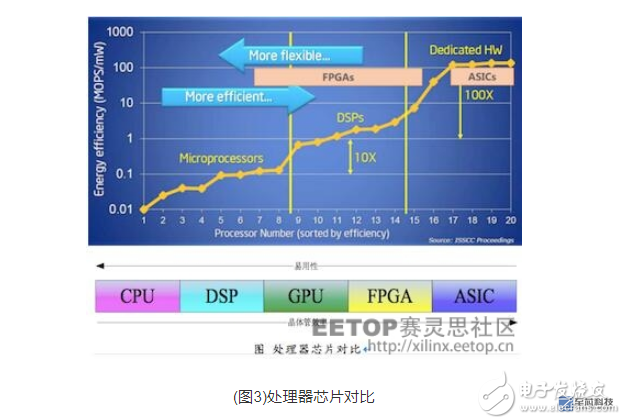

The CPU itself cannot meet the performance requirements of HPC applications, leading to a gap between demand and performance. Before a breakthrough in new chip materials and other basic technologies, an effective solution is to use a special co-processor for heterogeneous computing to improve processing performance. Existing coprocessors mainly include FPGAs, GPUs and ASICs. Because of its unique architecture, FPGAs have advantages that other processors cannot match.

An FPGA (Field Programmable Gate Array) lets software reconfigure the chip's internal resources to form hardware with different functions, just as the same Lego bricks can be built into an aircraft carrier or a Transformer. FPGAs therefore combine the programmability and flexibility of software with the high throughput and low latency of ASICs. In addition, thanks to their rich I/O, FPGAs are well suited to serve as protocol and interface conversion chips.

The biggest strengths of FPGAs in the data center are high throughput and low latency. All resources within the FPGA are reconfigurable, so it is easy to implement both data parallelism and pipeline parallelism and to strike a balance between the two. A GPU, by contrast, can only do data parallelism.
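The trade-off between pipeline parallelism and data parallelism can be illustrated with a simple cycle-count model. This is a minimal sketch, not a model of any real FPGA design: it assumes every pipeline stage takes exactly one clock cycle and that work items split evenly across parallel lanes, and the function names are hypothetical.

```python
def sequential_cycles(num_items: int, num_stages: int) -> int:
    """Cycles when each item finishes all stages before the next one starts."""
    return num_items * num_stages


def pipeline_cycles(num_items: int, num_stages: int) -> int:
    """Cycles for a pipeline with one-cycle stages (assumed).

    The first item needs num_stages cycles to fill the pipeline; after that,
    one item completes every cycle.
    """
    if num_items == 0:
        return 0
    return num_stages + num_items - 1


def parallel_pipeline_cycles(num_items: int, num_stages: int, lanes: int) -> int:
    """Cycles when the same pipeline is replicated across several lanes.

    Combines data parallelism (lanes) with pipeline parallelism (stages).
    """
    items_per_lane = -(-num_items // lanes)  # ceiling division
    return pipeline_cycles(items_per_lane, num_stages)


if __name__ == "__main__":
    items, stages = 1000, 4
    print("sequential:         ", sequential_cycles(items, stages))
    print("pipelined:          ", pipeline_cycles(items, stages))
    print("4 pipelined lanes:  ", parallel_pipeline_cycles(items, stages, 4))
```

In this model, pipelining alone brings the cost per item close to one cycle, and replicating the pipeline divides the remaining time by the number of lanes. On an FPGA, both choices consume logic resources, which is why the balance between them matters.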

Compared with ASICs, the programmability of FPGAs is a great advantage. Algorithms in the data center change constantly, and they do not stay stable long enough for an ASIC to complete its long development cycle. For example, a team might start turning a newly published neural network model into an ASIC, only to find that the model has been superseded by another before the chip even reaches production. FPGAs, by contrast, can be shared among different business needs. For example, machines can use their FPGAs to rank search results during the day; at night, when requests are few, the same FPGAs can be reconfigured to analyze offline data.

In addition, because FPGAs have abundant interfaces such as high-speed SerDes and give flexible control over the granularity of the implementation and of the data being operated on, they are well suited to protocol processing and data format conversion. For example, an FPGA can easily connect to Ethernet and perform packet filtering on Ethernet packets.
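To make the packet filtering example concrete, the logic can be sketched in software. This is only an illustration of what such a filter decides, not how an FPGA implements it: on an FPGA the same comparison would be done in hardware as frames stream through. The sketch assumes untagged Ethernet II frames (no VLAN tag), and the helper names are hypothetical.

```python
import struct

ETHERTYPE_IPV4 = 0x0800
ETHERTYPE_ARP = 0x0806
ETHERNET_HEADER_LEN = 14  # 6-byte destination MAC, 6-byte source MAC, 2-byte EtherType


def ethertype_of(frame: bytes) -> int:
    """Return the EtherType field of an untagged Ethernet II frame."""
    if len(frame) < ETHERNET_HEADER_LEN:
        raise ValueError("frame is shorter than an Ethernet header")
    _dst, _src, ethertype = struct.unpack("!6s6sH", frame[:ETHERNET_HEADER_LEN])
    return ethertype


def filter_frames(frames, allowed_ethertypes):
    """Keep only the frames whose EtherType is in the allowed set."""
    return [f for f in frames if ethertype_of(f) in allowed_ethertypes]


def make_frame(ethertype: int, payload: bytes = b"") -> bytes:
    """Build a test frame with all-zero MAC addresses (illustrative only)."""
    return bytes(6) + bytes(6) + struct.pack("!H", ethertype) + payload


if __name__ == "__main__":
    frames = [make_frame(ETHERTYPE_IPV4, b"ip"), make_frame(ETHERTYPE_ARP, b"arp")]
    kept = filter_frames(frames, {ETHERTYPE_IPV4})
    print(f"kept {len(kept)} of {len(frames)} frames")
```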

Differences in design from CPU, GPU, and ASIC

CPUs and GPUs both follow the von Neumann architecture, in which executing a task means going through instruction fetch, decode, execute, memory access, and write-back. For a CPU to be sufficiently general-purpose, the control logic of its instruction stream is quite complex. A GPU accelerates computation through methods such as SIMD (single instruction, multiple data) parallelism.

In an FPGA or ASIC, the hardware function modules are fixed, so no complicated control logic such as branch decisions is needed, and the number of memory accesses is greatly reduced. As a result, they can be one to two orders of magnitude more energy-efficient than a CPU.

An ASIC is a dedicated chip tailored to a specific need. Compared with general-purpose chips, ASICs are small, low in power consumption, high in computational efficiency, and low in cost when shipped in large volumes. But the disadvantages are also obvious: the development cycle is long, the algorithm is fixed, and once the algorithm changes the chip may not be reusable.

The FPGA is a "software-hardware integrated" architecture: the software is the hardware. The basic principle of an FPGA is to integrate a large number of digital gates and memories on the chip. By loading a configuration file into the FPGA, users define the connections between these gates and memories and thereby obtain different hardware functions.

In terms of development difficulty, ASIC > FPGA > GPU > CPU. At present, mainstream FPGA development uses hardware description languages (HDLs), which require developers to have the relevant skills. As high-level approaches such as OpenCL and HLS advance in the industry, the difficulty and duration of FPGA development will also be reduced.
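The idea that a configuration file turns the same hardware into different functions can be sketched with a toy lookup table (LUT), the kind of small configurable memory that FPGA logic cells are commonly built around. The text above does not describe the cell structure, so the LUT model, the class name, and the bit ordering here are illustrative assumptions rather than a description of any particular chip.

```python
class LookupTable:
    """Toy model of a configurable logic cell.

    The cell stores one output bit for every combination of its inputs.
    Loading different configuration bits changes the function it computes
    without changing the "hardware" (this class).
    """

    def __init__(self, num_inputs: int, config_bits):
        config_bits = list(config_bits)
        if len(config_bits) != 2 ** num_inputs:
            raise ValueError("need one configuration bit per input combination")
        self.num_inputs = num_inputs
        self.config_bits = config_bits

    def evaluate(self, *inputs) -> int:
        if len(inputs) != self.num_inputs:
            raise ValueError("wrong number of inputs")
        # The input bits form an index into the configuration memory;
        # the first input is the most significant bit (assumed ordering).
        index = 0
        for bit in inputs:
            index = (index << 1) | (1 if bit else 0)
        return self.config_bits[index]


# Two "configuration files" for the same two-input cell.
AND_CONFIG = [0, 0, 0, 1]
XOR_CONFIG = [0, 1, 1, 0]

if __name__ == "__main__":
    for name, config in (("AND", AND_CONFIG), ("XOR", XOR_CONFIG)):
        cell = LookupTable(2, config)
        table = [cell.evaluate(a, b) for a in (0, 1) for b in (0, 1)]
        print(name, table)
```

A real FPGA contains a very large number of such cells plus configurable wiring between them; the configuration file sets both.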

Where is the FPGA deployed and how does it communicate with the CPU?

Tencent Cloud's FPGAs are mainly deployed in data center servers. Tencent Cloud designed a PCIe board that carries the FPGA chip, DDR memory, peripheral circuits, and a heat sink. This FPGA board is installed on the server's motherboard. Users access the server remotely over the network, develop and debug on the FPGA, and use it to accelerate specific services.

The FPGA and the CPU communicate over a PCIe link. The CPU integrates a DDR memory controller and a PCIe controller. On the FPGA side, a PCIe controller, a DDR controller, and a DMA controller are likewise implemented inside the chip using programmable logic resources. Communication generally falls into three cases:

(1) Instruction channel: the CPU writes instructions to the FPGA chip and reads back its status. The CPU accesses the memory or internal bus attached inside the FPGA chip directly over PCIe.

(2) Data channel: when the CPU reads or writes DDR data on the FPGA board, it configures the DMA controller in the FPGA chip over PCIe, supplying the source and destination physical addresses of the data. The DMA controller then drives the DDR controller and PCIe controller on the FPGA card and transfers data between the DDR memory on the FPGA card and the DDR memory attached to the CPU.

(3) Notification channel: the FPGA sends an interrupt request to the CPU over PCIe. On receiving the request, the CPU saves its current execution context and jumps to the interrupt handler, disabling interrupts while the handler runs if necessary. When the handler finishes, the CPU re-enables interrupts, restores the saved context, and resumes execution.
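The three channels above can be sketched as a small pure-software simulation. It is not Tencent Cloud's driver or API: the class, the register address, and the event name are all hypothetical, and host memory and card DDR are modelled as plain byte arrays so that the flow of an instruction write, a DMA transfer, and a completion interrupt is visible end to end.

```python
class SimulatedFpgaCard:
    """Toy model of an FPGA card seen from the CPU side (illustrative only)."""

    def __init__(self, ddr_size: int):
        self.registers = {}              # instruction channel: control/status registers
        self.ddr = bytearray(ddr_size)   # DDR memory on the card
        self.interrupt_handler = None    # notification channel: callback on the CPU side

    # (1) Instruction channel: the CPU writes commands and reads status.
    def write_register(self, address: int, value: int) -> None:
        self.registers[address] = value

    def read_register(self, address: int) -> int:
        return self.registers.get(address, 0)

    # (2) Data channel: the CPU supplies source, destination, and length,
    # and the DMA engine moves the data.
    def dma_to_card(self, host_memory: bytearray, src: int, dst: int, length: int) -> None:
        self._check_range(len(host_memory), src, length)
        self._check_range(len(self.ddr), dst, length)
        self.ddr[dst:dst + length] = host_memory[src:src + length]
        self._raise_interrupt("dma_done")

    def dma_from_card(self, host_memory: bytearray, src: int, dst: int, length: int) -> None:
        self._check_range(len(self.ddr), src, length)
        self._check_range(len(host_memory), dst, length)
        host_memory[dst:dst + length] = self.ddr[src:src + length]
        self._raise_interrupt("dma_done")

    # (3) Notification channel: the card signals the CPU when work completes.
    def _raise_interrupt(self, event: str) -> None:
        if self.interrupt_handler is not None:
            self.interrupt_handler(event)

    @staticmethod
    def _check_range(size: int, start: int, length: int) -> None:
        if start < 0 or length < 0 or start + length > size:
            raise ValueError("transfer is outside the memory region")


if __name__ == "__main__":
    CONTROL_REGISTER = 0x00  # hypothetical register address
    events = []
    card = SimulatedFpgaCard(ddr_size=64)
    card.interrupt_handler = events.append

    host = bytearray(b"hello world")
    card.write_register(CONTROL_REGISTER, 1)        # instruction channel
    card.dma_to_card(host, src=0, dst=16, length=5)  # data channel
    print(bytes(card.ddr[16:21]), events)            # notification channel recorded in events
```

In real hardware the register access goes through memory-mapped PCIe address space and the interrupt is delivered by the PCIe controller; the simulation only preserves the order of operations.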

Problems currently facing the FPGA industry

In the industry, Microsoft uses an FPGA architecture in its data centers, and Amazon has launched FPGA compute instances. Does this mean FPGAs are now widely used across the industry? In fact, an FPGA is a hardware chip that cannot be used directly on its own, and it lacks system software support such as an operating system. For a long time, the FPGA industry in the field of compute acceleration has consisted of the following parties:

1. Chip manufacturers: Xilinx and Altera (acquired by Intel) supply FPGA chips, which are sold directly to distributors.

2. IP providers: they provide IP for various functions, such as IP for accessing DDR memory, IP supporting PCIe devices, and IP for picture encoding and decoding. Some common IP is provided by the chip manufacturers.

3. Integrators: they provide hardware and software support. Because end users lack hardware design and manufacturing capabilities, they usually want integrators to supply mature, complete hardware, take care of IP integration, and provide drivers and usage instructions.

4. Users: the end users. In the data center field, their general purpose is to use FPGAs to accelerate computation.

In the FPGA industry, chip manufacturers do not provide ready-to-use hardware boards; that is the integrators' job. Because boards ship in small volumes over which design and production costs must be spread, a board often costs far more than the chip itself, sometimes as much as ten times more.

IP providers, fearing leakage of their intellectual property, are often slow to provide users with usable executable files (netlist files). A series of agreements and legal documents must be signed first, and some IP providers offer users no opportunity to test at all. As a result, it is hard for an end user to obtain a usable hardware board, and harder still to obtain one with the latest chips in good time, so users cannot quickly evaluate different IP and choose what suits their business. In addition, FPGA development uses hardware description languages and lacks the framework concepts so widely used in software, which leads to long development cycles. In general, an FPGA development cycle is about three times as long as that of software development.

In short, these problems are what make the cloud's disruption and transformation of the FPGA industry inevitable.

Specific problems the Tencent Cloud FPGA platform can solve

The Tencent Cloud FPGA platform solves several problems that affect the whole FPGA industry. FPGA users are relatively few and form a fairly closed circle. The high barrier to FPGA development, the lack of high-quality open-source IP, and high chip prices have long drawn criticism.

For developers, Tencent Cloud's FPGA platform provides an underlying hardware support platform that plays a role similar to some functions of an operating system. It simplifies access to common low-level devices such as DDR and PCIe, so that developers can concentrate on developing business functions.

IP providers and users in the FPGA industry lack an open trading platform and a credit guarantee mechanism. IP transactions involve long chains and opaque prices, deals are hard to close, and after acquiring IP the buyer still has to build a hardware platform to verify its performance. All of this seriously delays time to market, often by months. Tencent Cloud provides an FPGA IP store through which IP developers and IP providers can offer FPGA IP and the corresponding test programs to other customers, free of charge or for a fee. This IP is developed and customized for Tencent Cloud's standard FPGA hardware, so verification and testing can be completed easily on the cloud platform. An IP transaction can be shortened from several months to one day, which improves transaction efficiency and makes IP transactions more transparent.

Small companies that want low-latency, high-quality computing services can use FPGA cloud acceleration services without investing a lot of manpower in high-performance computing development, and can simply integrate high-performance cloud computing services into their own network platforms to improve the user experience. Examples include low-latency image format conversion and image classification based on deep learning; the range of such services will be further enriched.

For FPGA teaching, schools previously had to buy a development board for each student. With the Tencent Cloud platform, that cost can be saved: a school only needs to apply for an FPGA cloud platform account for each student, and students can log in directly and learn and develop by following the demos. The Tencent Cloud platform will also provide easy-to-follow operation guides and lab courses. What users learn is closer to real application scenarios in industry and connects well with the needs of their future work.

In addition, large-capacity FPGA chips are relatively expensive. One important reason is that FPGA chips lack high-volume hit products. The Tencent Cloud FPGA platform can aggregate a large number of customers onto Tencent's standard FPGA hardware, which increases the volume of FPGA chips supplied, helps chip manufacturers reduce costs, and gradually eases the problem of expensive FPGA chips.

From these we can see that FPGA cloudization is of great significance, can promote the development of the entire FPGA industry, and bring benefits to all parties in the FPGA industry chain.

FPGA Application Benefits in Internet Business

First, picture transcoding

With the development of the mobile Internet, users upload more and more pictures every day. Within the company, services such as QQ photo albums and WeChat need image transcoding, and most of the image formats used in these services are JPEG, WebP, and the like. The computation consumed by picture transcoding requires tens of thousands of CPU machines. The first application scenario of FPGAs in the Internet business was therefore picture transcoding: converting JPEG pictures into WebP pictures. In this project, FPGA processing latency was 20 times lower than that of the CPU, and FPGA processing performance was 6 times that of the CPU machine.

To raise the picture compression ratio further, the team turned to the HEVC (High Efficiency Video Coding) standard. HEVC I-frame picture compression is a great improvement over earlier formats such as WebP and JPEG: its compression ratio is about 20% to 30% higher than WebP's and, on average, about 50% higher than JPEG's. HEVC therefore has great advantages in saving download bandwidth, reducing back-end storage costs, and improving the user's picture download experience. The problem is that HEVC I-frame compression is computationally very complex, so transcoding on CPUs is expensive, which makes it hard to roll out in the business. To strengthen its picture transcoding capability, Tencent continued to use FPGAs to accelerate picture transcoding.
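The size figures quoted in this article can be cross-checked with a little arithmetic. The sketch below assumes that "compression ratio N% higher" means "files N% smaller", which is an interpretation rather than something the text states, and it starts from a hypothetical 100 KB JPEG picture.

```python
def size_after_saving(original_size: float, saving: float) -> float:
    """Size after reducing original_size by the given fraction (0.30 = 30%)."""
    return original_size * (1.0 - saving)


def relative_saving(reference_size: float, new_size: float) -> float:
    """Fraction by which new_size is smaller than reference_size."""
    return 1.0 - new_size / reference_size


JPEG_KB = 100.0                                # hypothetical starting size
WEBP_KB = size_after_saving(JPEG_KB, 0.30)     # WebP: about 30% smaller than JPEG
HEVC_KB = size_after_saving(JPEG_KB, 0.50)     # HEVC: about 50% smaller than JPEG

if __name__ == "__main__":
    print(f"WebP: {WEBP_KB:.0f} KB, HEVC: {HEVC_KB:.0f} KB")
    print(f"HEVC vs WebP saving: {relative_saving(WEBP_KB, HEVC_KB):.1%}")
```

Under these assumptions the HEVC picture comes out a little under 30% smaller than the WebP picture, which is consistent with the 20% to 30% range quoted above.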

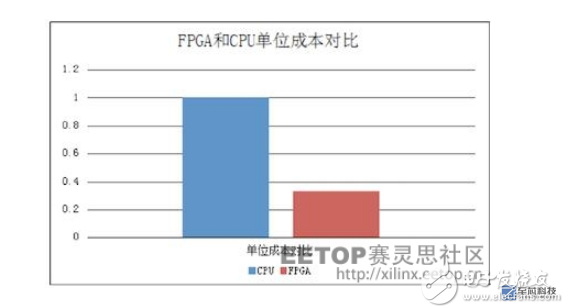

In testing, WebP and JPEG pictures were transcoded into HEVC pictures, using 1920x1080 test pictures. FPGA processing latency was 7 times lower than that of the CPU, FPGA processing performance was 10 times that of the CPU machine, and the unit cost of the FPGA model was 1/3 that of the CPU model.

Second, picture classification

In recent years, deep learning has played an increasingly important role in speech recognition, picture classification and recognition, and recommendation algorithms. In the mobile Internet era, Tencent uses FPGAs to accelerate CNN computation in order to strengthen its picture detection capability and reduce the cost of picture detection.

The R&D team implemented the AlexNet model of the CNN algorithm on FPGAs. FPGA processing performance was 4 times that of the CPU machine, and the unit cost of the FPGA model was 1/3 that of the CPU model.

FPGAs can make developers/teams more "willful"

For external developers and development teams, Tencent Cloud FPGA first of all provides a unified hardware platform. Developers do not need to concern themselves with the FPGA infrastructure, which removes the problems and challenges of repeatedly developing hardware platforms. Deployment is fast: a brand-new FPGA platform can be deployed within minutes. The rich logic resources of the FPGA chip give developers the room to be "willful". The unified platform also lets development teams scale the hardware platform quickly and elastically, improving the reliability of business disaster recovery. Second, Tencent Cloud FPGA provides a complete development environment, so no dedicated staff are needed to build a driver environment. A choice of development languages, HLS, OpenCL, and RTL, meets the needs of different types of developers, lowers the barrier to learning and development, and makes the platform easy to use. Third, Tencent Cloud FPGA provides rich IP capabilities: a large amount of free and paid IP is available, and the transaction process is transparent, safe, and reliable. This speeds up development and also gives development teams a platform on which to sell IP they have developed themselves. Finally, Tencent Cloud FPGA provides professional security protection. Being deployed in the cloud, it enjoys the same basic cloud security protection and advanced security services as cloud servers, which removes the data storage and transmission security problems of traditional FPGA use.

It can be seen that the problems of traditional FPGA development, such as hardware platform stability, the high barrier of development languages, long debugging cycles, and joint debugging with driver software, will all be eased. Developers and development teams can be freed from complicated, repetitive tasks and devote more time and energy to innovative work. This will add more innovation to the whole technology R&D environment and create more value.

The future industry value of FPGAs

At present, AI is booming. Thanks to their high-density computing capability and low power consumption, FPGAs have been deployed at scale for online prediction in deep learning (advertising recommendation, image recognition, speech recognition, and so on). Users often compare FPGAs with GPUs: GPUs offer ease of programming and high throughput, while FPGAs offer low power consumption and ease of deployment, so each has its own strengths. Compared with GPUs and ASICs, the core competitive strengths of FPGAs are low latency and programmability.

For the industry, the cloud embodies the idea of shared services. Users no longer have exclusive use of hardware and software; instead, they share and reuse them. The cost of use therefore drops sharply, and resources are used more efficiently. FPGA cloud services create value for every participant in the industry:

1. Chip manufacturers: they no longer need to go through layers of agents that add cost, and hardware boards can be offered for shared use through the cloud. Because hardware is procured and maintained centrally, stability and reliability improve greatly.

2. IP providers: IP can be placed on the cloud platform's marketplace. When an end user uses it, the cloud platform deploys and delivers it, and the user never touches the executable file (netlist file), so there is no risk of leaking intellectual property. This opens up new service models for IP providers, such as time-based billing, buy-out billing, and even free trial versions, and lets users verify IP quickly.

3. Design and development: the cloud provides a framework that encapsulates common system-level operations (DDR memory access, DMA, PCIe device control, and so on). It supports hardware description languages as well as OpenCL and C-like high-level languages, and it provides common drivers and call libraries so that users do not have to write them. Advanced users can also use OpenCL or a hardware description language to implement their own functions.

4. FPGAs were first applied in the communications industry. Given their high communication bandwidth and real-time processing capability, what changes can they bring to data center infrastructure? FPGAs can currently be applied in areas that matter a great deal to an IDC, such as low-latency network architecture, network virtualization, high-performance storage, and network security. Encouragingly, peers such as Microsoft and Amazon have already made many positive attempts with FPGAs in their public cloud networks, and Tencent Cloud is actively exploring and practicing in multiple directions.

Predictably, with the help of FPGAs, our data centers will become greener and more efficient.
